StarQuest Technical Documents

SQDR Plus: Setting up a Windows Failover Cluster

Last Update: 30 June 2021

Product: SQDR Plus

Version: 4.50 and later

Article ID: SQV00PL038

Abstract

Windows Server Failover Clustering (WSFC) is a feature of the Windows Server platform for improving the high availability (HA) of applications and services. It is included in all editions of Windows Server 2012 and later, and in Enterprise and Datacenter editions of Windows Server 2008R2.

This document describes how to configure Windows, IBM Db2 LUW (Linux, UNIX & Windows), SQDR Plus, and SQDR to provide high availability for the StarQuest Data Replicator solution. The procedure below was performed using VMWare ESXi 6.5, Windows Server 2016 Standard Edition, and Db2 11.1 Workgroup Edition. The procedure may vary if you are using a different virtualization solution or a different version or edition of Windows or Db2.

We recommend that you download and complete the SQDR Plus Cluster Checklist (XLSX format) as you perform the procedure.

Solution

- UserIDs

- Other Prerequisites

- VM Setup - "Cluster in a box", using shared virtual disks

- Add Failover Clustering Role to Windows

- Firewall

- Create the Cluster

- Add the two shared drives and validate the cluster

- Test the Cluster

- Install Db2 on both systems

- Create the clustered Db2 instance on one system

- Adding Db2 Role to the cluster using db2mscs

- Test Db2 Failover

- Set the DB2INSTANCE environment variable and reboot

- Install StarQuest products on node 1

- Install StarQuest Products on node 2

- Configure SQDR and SQDR Plus on node 1

- Verify SQDR and SQDR Plus configuration on node 2

- Create cluster role for SQDR Service

- Testing the SQDR Role

- Create and test roles for SQDR Plus services

- Updating StarQuest Products

- Updating Db2 and Windows

- Troubleshooting

- References

UserIDs

It is important to understand the functions of each of the following userID's and their needed authorities.

Setup user: The setup of the Windows cluster should be performed on the two nodes of the cluster by a domain user (e.g. mydomain\clustadm) who is a member of the local Administrators group on both systems, and has sufficient authorities on the domain to create a computer (add an OU entry) in the Active Directory. The authorities needed are Create Computer objects and Read All Properties permissions in the container that is used for computer accounts in the domain (typically the container named Computers).

The domain administrator should perform the following steps to create the setup user and grant it the necessary authorities:

- Open Active Directory Users and Computers

- Create the domain user e.g. clustadm

- Right click on Computers and select Delegate Control...

In the Delegation of Control Wizard:

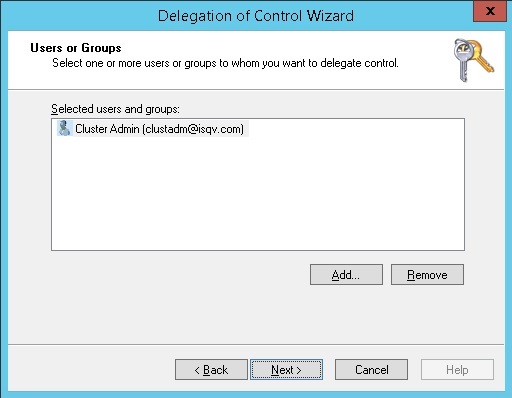

- On the Users or Groups panel, add the user and select Next.

- On the Tasks to Delegate panel, select Create a custom task to delegate and select Next.

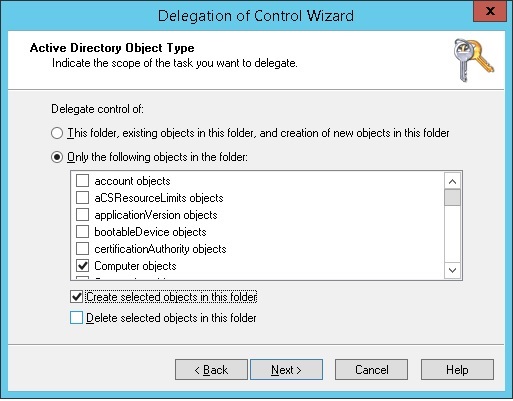

- On the Active Directory Object Type panel, select Computer objects and Create selected objects in this folder. Select Next.

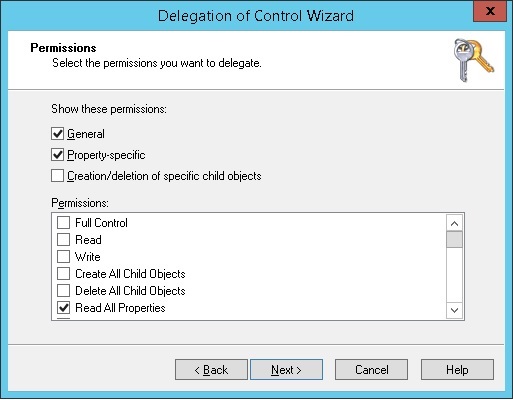

- On the Permissions panel, select Read All Properties. Select Next.

- Select Finish.

db2admin: The Db2 services will be run as a domain user e.g. mydomain\db2admin who is a member of the local Administrators group on both systems.

sqdr: a local user named sqdr should be created on both systems, with the same password, for use by the SQDR and SQDR Plus applications. This user does not need any special authorities, but it must be named sqdr and we recommend that its password be set to non-expiring.

After the domain users (mydomain\clustadm and mydomain\db2admin) have been created, add these users to the local Administrators group of both systems. All of the remaining tasks will be performed while logged on to the 2 virtual machines as mydomain\clustadm.

Other Prerequisites

- Be sure that the address pool on your DHCP server (i.e. your domain controller) has at least six available addresses for use as the Client Access Points for each of the five cluster roles that will be created, plus one for the cluster itself.

- OR - if you do not have a DHCP server, assign six static IP addresses for use as the Client Access Points, and add them to your DNS server (to prevent the accidental use of these addresses for another purpose and to enable access to the services from other machines).

VM Setup - "Cluster in a box", using shared virtual disks

In order for a failover cluster to work, each server's hardware specifications must be the same and the servers must share storage. The two servers communicate through a series of "heartbeat" signals over a dedicated network, and they use a shared disk for application data such as Db2 databases and the SQDR Plus configuration information.

For our test setup, we created a cluster of two virtual machines on the same ESXi host (also known as "cluster in a box"), using shared storage and an internal network provided by that host. This configuration protects against failures at the operating system and application level and eliminates downtime during system updates, but it does not protect against hardware failures.

A cluster of virtual machines across physical hosts (a "cluster across boxes") protects against software failures and hardware failures on the physical machine by placing the cluster nodes on separate ESXi hosts. This configuration requires a private network and shared storage such as a Fibre Channel SAN, FCoE, or iSCSI.

See the VMWare document Setup for Failover Clustering and Microsoft Cluster Service (VMware vSphere 6.5) for details about cluster in a box and cluster across boxes.

We created two identical Windows Server 2012R2 VM's on the same VMWare ESXi host, joined to a Windows domain. Each VM has 2 local disks (each 40gb, thick provision eager-zero), C: for the Windows system and D: for Program Files.

Each VM has 2 network interfaces (type vmxnet3), with one NIC connected to the main network (using a DHCP address) and the other to a private network within the VMWare host (using a static IP address).

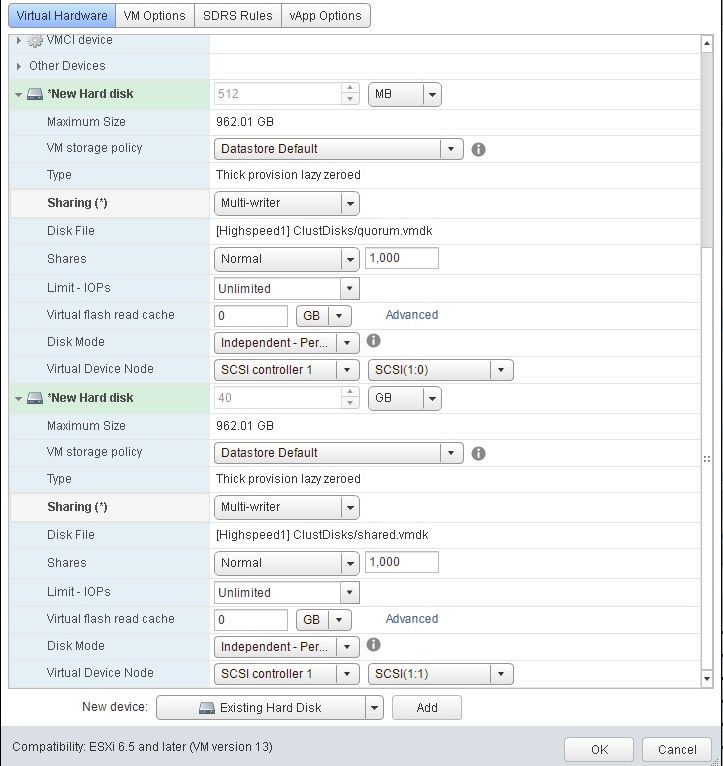

In addition to the two local disks, we created a new SCSI controller (type VMware Paravirtual, virtual sharing enabled) and two shared disks, a 512mb disk to use as Quorum Witness and a larger disk to use for shared data. For this test, we used a 40gb disk, but it should be large enough to meet or exceed anticipated usage (typically 100gb-300gb). These disks are also thick provision eager-zero, defined as Indedependent/Persistent, and attached to the new SCSI controller as Sharing - multi-writer. The 512mb disk is attached to SCSI(1:0) and the 40gb disk is attached to SCSI(1:1).

After creating and formatting the disks on one system, we stopped the VM, moved the disks to a shared folder and renamed them, and then removed the disks from the VM & reattached them (as an existing disk); this step was for house-keeping purposes and is optional.

We also attached the disks (as an existing disk) to the 2nd VM.

Add Failover Clustering Role to Windows

Log on to the two VM's as mydomain\clustadm.

Add the Failover Cluster Role to both systems by selecting Add Roles & Features from the Manage menu of Server Manager. Under Features, select Failover Clustering; this will also install the following features:

[Tools] Failover Cluster Management Tools

[Tools] Failover Cluster Module for Windows PowerShell

You should now see the Failover Cluster Manager in Administrative Tools and the Tools menu of Server Manager.

You may also want to add the Failover Cluster Management Tools to an administrator workstation for monitoring and managing the cluser from a non-cluster machine.

You may want to check for Windows Updates after adding the new feature.

Firewall Exceptions

If the Windows firewall is running, add exceptions for the following:

* port 50000 (Db2 DRDA)

* ports 8080 & 8443 (SQDR Plus Jetty aka SQDR Manager)

* program sqdrsvc.exe (after installation of SQDR)

* the three Remote Service Management rules

The exception for port 50000 (DRDA access to Db2) allows access to Db2 from a remote system using the Client Access Point name.

The exceptions for ports 8080 & 8443 (browser access to SQDR Manager) and to the application sqdrsvc.exe and the three Remote Service Management rules (for use by Data Replicator Manager) allow management and monitoring from an administrator workstation that is not part of the cluster - i.e. an environment that will always be available no matter what is happening with the cluster.

To use Data Replicator Manager from another machine, add the domain user that you use to log on to that workstation to the appropriate local groups (Administrators and Distributed COM Users), as described in the Managing Remote Servers topic of the SQDR Help file.

Create the Cluster

- Start the Failover Cluster Manager in Administrative Tools and the Tools menu of Server Manager.

- In the Failover Cluster Manager, right-click on Failover Cluster Manager and select Create Cluster.

- Add the servers by name.

- Uncheck Validate Cluster (we will validate the cluster later).

- Name the cluster e.g. mycluster.

- Uncheck the box "Add all eligible store to the cluster". We will add the storage later.

If the user lacks sufficient authority on the domain to create a computer object, you will see the following error; see UserIDs above for details on granting domain authority.

Create computer object cluster on domain controller \\mydc.mydomain.com in organizational unit CN=Computers,DC=mydomain,DC=com. A constraint violation occurred.

If the cluster is created successfully, you may ignore the following warning, as we will be adding the quorum disk later:

An appropriate disk was not found for configuring disk witness. The cluster is not configured with a quorum witness. As a best practice, configure a quorum witness to help achieve the highest availability of the cluster.

Add the two shared drives and validate the cluster

- After the cluster is created, expand the tree Storage/Disks and add the 512mb disk.

- Right-click on the cluster name and select More Actions../Configure Cluster Quorum Settings. Use default quorum configuration will assign the 512mb drive as the Quorum Witness.

- Under Storage/Disks, add the 40gb disk. This will be assigned to the Db2 role later.

- If you want to change drive letters for the share drives, select the disk and, in the lower panel, right-click and select Change drive letter. We used the drive letters Q: & S:.

- Right-click on the cluster name and select Validate Cluster. The validation test takes 5-10 minutes to run. You may ignore the warning Disk does not support SCSI-3 Persistent Reservations commands.

Test the Cluster

Under nodes, select Pause/Drain Roles and Resume/Failback and verify that the shared disks transfer between the nodes as expected.

Install Db2 on both systems

- Before installing Db2, verify that that 8.3 naming is enabled on the D: & S: drives, as Db2 may have issues using disks without 8.3 naming enabled.

C:> fsutil 8dot3name query D:

C:> fsutil 8dot3name query S:

C:> fsutil.exe behavior set disable8dot3 0

- Confirm that a domain user to be used for the Db2 services e.g. mydomain\db2admin has been created and added to the local Administrators group.

- Install Db2 on both systems. Choose Custom and perform the following adjustments:

- Install Program Files & ProgramData to a local drive e.g. D:

- Specify the domain account you created above (mydomain\db2admin) for the Db2 services. If the installing user is not a domain administrator, you will get this warning; be sure you have entered the correct credentials and continue the installation.

The domain ID and password cannot be verified because domain administrator privileges are required.

- At Configure Db2 instances, select Configure... and change the Port to 60000, so that the clustered instance (to be created later) can use 50000. You can also set the default instance to not start automatically. The default instance will not be used in this solution.

- Uncheck Enable Operating System Security.

Create the clustered Db2 instance on one system

Make sure that the shared drive S: is currently attached to the first system.

Using a Db2 Administrator Command Window, create the clustered instance and specify its listening port as port 50000:

C:> db2icrt DB2CLUST -s wse -u mydomain\db2admin

Enter current password for mydomain\db2admin:

C:> set DB2INSTANCE=DB2CLUST

C:> db2 update dbm cfg using SVCENAME 50000

C:> db2set DB2COMM=TCPIP

Recycle Db2 with db2stop and db2start and verify that there is an active listener on port 50000 using netstat -an or Task Manager/Resource Manger/Listening ports.

The output of db2ilist should now show 2 local instances:

DB2

DB2CLUST

Do not create the instance on the second system, as this will be done by the db2mscs utility in the next step.

Adding Db2 Role to the cluster using db2mscs

In a Db2 command window (Administrator), run the following command:

C:> db2wolfi i

DB2 Server now appears under Resource Types (right click/Properties of the cluster)

The db2mscs utility is a stand-alone command line utility used to transform a non-MSCS (Microsoft Server Cluster Services) instance into an MSCS instance. The utility will create all MSCS groups, resources, and resource dependencies. It will also copy all Db2 information stored in the Windows registry to the cluster portion of the registry as well as moving the instance directory to a shared cluster disk. The db2mscs utility takes as input a configuration file provided by the user specifying how the cluster should be set up.

The directory \Program Files\IBM\SQLLIB\cfg contains several sample db2mscs configuration files. Since we are using Db2 Workgroup Edition, we used db2mscs.wse. Refer to the comments section of this file for usage.

Copy db2mscs.wse to db2clust.wse in a working directory (e.g. C:\temp) and edit it:

DB2_INSTANCE=DB2CLUST

CLUSTER_NAME=mycluster

GROUP_NAME=DB2ROLE

DISK_NAME=Cluster Disk 1

(or whatever name the shared disk appears as in Cluster Manager)

The value supplied for GROUP_NAME will appear as the role in the cluster manager after completion of db2mscs.

Since we are using a single partition enviroment, we commented out the other parameters.

In a Db2 command window (Administrator), stop Db2 and run db2mscs:

C:> set DB2INSTANCE=DB2CLUST

C:> db2stop

C:> cd \temp

(i.e. the directory where you placed the edited config file)

C:> db2mscs -f db2clust.wse -l mydomain\db2admin

If db2mscs fails, add -d:<debug_file> to the command line and run it again. Be sure that Db2 is stopped; otherwise you might receive return code error of DBI1969 and see the following in the debug file:

DB2MSCS_Migrate_DB2_Instance fnc_errcode 1533

This automatically creates the Profile directory S:\DB2PROFS. The new role (the value of GROUP_NAME) will appear in Cluster Manager under Roles.

If you do not supply the user name (-l) parameter, the Db2 service is created under the local administrator account on the remote machine; i.e. it will be running as Local System Account.

db2ilist should now show that DB2CLUST is a clustered instance:

DB2CLUST C : cluster

DB2

Note that the disk specified in the configuration file is now associated with the role.

On the second system, db2ilist should produce similar output, and a new service DB2CLUST (manual start) appears in the Services control panel.

If you need to undo this operation, use either

db2mscs -u instance_name

or

db2iclus unmigrate

You may need to stop/restart Db2 before the removal completes.

In Cluster Manager, right click on the Role and select Add Resource../Client Access Point. Supply a name e.g. MYDB2. If you are using static IP addresses for Client Access Points, you will also need to supply an IP address on the main network and (if remote access is desired) add an entry to your DNS server. This name can be used to access Db2 from remote systems, regardless of which system in the cluster it is currently running on. If the Windows firewall is enabled, add an exception for port 50000 on both systems.

Test Db2 Failover

Create a sample database:

C:> set DB2INSTANCE=DB2CLUST

C:> db2sampl -dbpath S:

Confirm that you can connect to it locally on the first system (db2 CONNECT TO SAMPLE). You may need to start a new Db2 command window. If desired, confirm that you can connect to it from a remote machine using the name supplied as the Client Access Point as the hostname.

In Cluster Manager, right click on the node for the first system and Select Pause/Drain Roles.

Within a few seconds, you should see that the Role and the shared disk have been transferred to the second system. Verify that you can connect to the SAMPLE database locally on the second system. If you have configured a connection on a remote system, you should be able to connect from there as well. Some applications such IBM Data Studio will automatically reconnect when they detect a disruption in communications.

You can also verify Db2 failover by stopping or rebooting one system and confirming that the Db2 role automatically moves to the other system.

After verifying that Db2 is functioning on the second system, move it back to the first system: In Cluster Manager, right click on the node for the first system and Select Resume/Fail Roles Back.

Set the DB2INSTANCE environment variable and reboot

On both systems:

- Open the System control panel

- Select Advanced system settings

- Select Environment Variables...

- Under System variables, edit DB2INSTANCE - change from DB2 to DB2CLUST.

- Reboot

Install StarQuest products on node 1

In most environments, the StarQuest products are typically installed using the all-in-one combined installer that installs some products silently to their default location (C:\Program Files\StarQuest). For this cluster environment, it was requested that minimal changes be made to the system drive (C:) and that the Program Files of all products be installed to the D: drive. This can be done by using the PRQ.BAT file to install the prerequisite applications (StarSQL, SQDR_ODBC, SQDR, JRE) first; instructions are in the comments of the batch file. Then run SETUP.EXE to install SQDR Plus.

Before installing SQDR Plus, pause the second node to prevent the Cluster Manager from attempting to move the Db2 role when the SQDR Plus temporarily stops Db2. This is only necessary on the initial installation of SQDR Plus. Sometimes, you may notice the Db2 role enter a Failed state; you can restart it within Cluster Manager.

When installing SQDR Plus, make the following choices:

- Location of DB2 databases and logs: S:

- Destination: D:\Program Files\StarQuest\sqdrplus

- ProgramData Folder: Place SQDR Plus (64-bit) Program Data in S:\ProgramData\StarQuest\

- DB2 Instance: DB2CLUST (this should already be selected)

Note that SQDR Plus creates the SQDRC control database used by SQDR - i.e. you should install SQDR Plus before running the SQDR Configuration utility.

Install StarQuest Products on node 2

- Stop the SQDR and SQDR Plus services on node 1 using the Services control panel.

- Transfer the Db2 role and the S: shared disk to the second node by resuming the second node and pausing the first node.

- Optional: If you wish to install products on another drive (e.g. D): rather than the default drive (e.g. C:), use the PRQ.BAT file to install the prerequisite applications (StarSQL, SQDR_ODBC, SQDR, JRE).

- Install SQDR Plus.

Configure SQDR and SQDR Plus on node 1

Stop the SQDR and SQDR Plus services on node 2 using the Services control panel and transfer the Db2 role and the S: shared disk to the first node.

When configuring SQDR, use DSN-less connection strings rather than ODBC data sources for the connection to the SQDRC control database and to the source and destination databases.

- Run the SQDR Configuration utility. This creates control tables in the SQDRC database.

- Set the SQDR service to run as Manual.

- Confirm that you can use Data Replicator Manager to connect to the local SQDR service.

- Restart the SQDR Plus services.

- Using the browser-based SQDR Manager, create an SQDR Plus agent. This will create a Db2 database SQDRPn.

Verify SQDR and SQDR Plus configuration on node 2

- Stop the SQDR and SQDR Plus services on node 1 using the Services control panel and transfer the Db2 role and the S: shared disk to the second node.

- Run SQDR Configuration on node 2, supplying the same information as when run on node 1 (with the exception of choosing Use existing control tables rather than Create new control tables), or copy C:\ProgramData\StarQuest\SQDR\sqdr.properties from node 1 to node 2. This file contains the information that SQDR needs to connect to the SQDRC control database.

- Confirm that you can use Data Replicator Manager to connect to the local SQDR service.

- Start SQDR Plus services and verify that you can see the agent in the browser-based SQDR Manager.

- Stop SQDR and SQDR Plus services and transfer the Db2 role and shared disk back to node 1.

Create cluster role for SQDR Service

- In Cluster Manager, right-click on Roles and Select Configure Role.

- Select Generic service.

- Select the SQDR service (StarQuest Data Replicator).

- Enter a name for the Client Access Point e.g. CLUSTSQDR.

- If you are using static IP addresses instead of DHCP, assign an IP address.

- Continue through the dialogs for storage devices and registry info.

- On completion of the wizard, the creation of the role will take several minutes. You may see the role temporarily transferred to the second node and then back to the first node.

- On each machine, use the sc (service control) command in an elevated command window to add a dependency to the Db2 instance server (e.g. DB2CLUST) on the SQDR service:

C> Sc config sqdrsvc depend=RpcSs/DB2CLUST

Testing the SQDR Role

Assuming that you have enabled the firewall exceptions for sqdrsvc.exe and the three Remote Service Management rules, you should be able to connect to SQDR while running Data Replicator Manager on any machine (from either of the two nodes, or from a administrator workstation). Instead of connecting to the local service, connect to the Client Access Point e.g. CLUSTSQDR.

In Cluster Manager, right click on the node for the first system and select Pause/Drain Roles.

Within a few seconds, you should see that the shared disk and both Roles (DB2CLUST and CLUSTSQDR) are transferred to the second system. Verify that the SQDR service is running on the second system and stopped on the first system, and that you can connect with Data Replicator Manager, either connecting locally on the second system or to the Client Access Point name from any system. If Data Replicator Manager was already running and connected to the Client Access Point name, you may see an RPC error, followed by an automatic reconnection.

Create and test roles for SQDR Plus services

Using Cluster Manager, transfer the Db2 and SQDR service roles back to the first system by right-clicking on the node for the first system and selecting Resume/Fail Roles Back.

Create roles for each of the three SQDR Plus services (SQDR Plus Derby, SQDR Plus Jetty, SQDR Plus Launch Agent) in the same manner as done for the SQDR service: In Cluster Manager, right-click on Roles, Select Configure Roles, select Generic service, select the service, and enter a Client Access Point name and (if necessary) a static IP address.

Make note of the name used for the Jetty service. Use this name instead of localhost or the system name when connecting to SQDR Manager from a browser e.g. http://CLUSTJETTY:8080/SQDRManager.

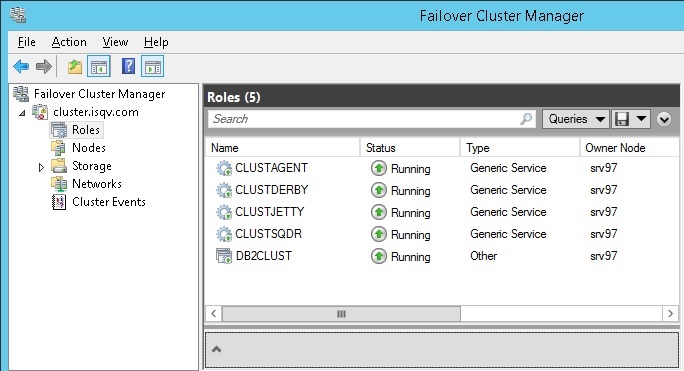

You will now have a total of 5 roles

Select each node and use Pause/Drain Roles to test transferring the entire solution (Db2, SQDR service, SQDR Plus Derby, Jetty, and Capture Agent) between nodes.

Updating StarQuest Products

The purpose of the cluster is to minimize downtime of the SQDR replication solution. Since an update involves shutting down services for a period of time and possibly a reboot, we will perform the update first on the system where the SQDR solution is not running, then transfer the roles to that system while we upgrade the other system.

The SQDR Plus installer requires that the S: drive (the shared drive where we placed the SQDR Plus ProgramData area) be present during an update. However, the S: drive is associated with the active roles, and so must be connected to the active system. The solution is to attach a temporary drive as S: (i.e. a proxy S: drive) in order to satisfy the installer, which will populate a fresh copy of the ProgramData area that can be ignored or discarded. In the procedure below, we created and attached a VHD (a virtual hard disk based on a Windows file); a physical or virtual hard disk supplied by VMWare or HyperV could also be used.

Create a VHD (virtual hard disk):

- Open Computer Management from Administrative Tasks.

- Right-click Disk Management and select Create VHD.

- Browse to a location (e.g. D:\temp) and create a VHD file e.g. SDriveTemp.vhd; Virtual hard disk size 100mb (or more).

If you have just created the VHD, it is automatically attached.

In Disk Management, initialize & format the new virtual drive and mount as S:

- Right-click on the left column (where it says e.g. Disk 4 Basic) & Initialize the disk.

- Right-click on the disk rectangle and select New Simple Volume...

- Continue through the New Simple Volume Wizard.

- At the Assign Drive Letter or Path, select S from the dropdown.

- Enter a volume name e.g. S drive temp.

To re-use a VHD from a previous update:

- Right-click Disk Management and select Attach VHD.

- Browse to the location of the VHD file.

- Assign drive letter S:.

After a temporary S: drive has been attached, update the StarQuest products as usual.

The Services control panel will show that the SQDR Plus services will be started (using the fresh ProgramData area created on the temporary S: drive). Stop all three services by stopping the SQDR Plus Derby service.

Detach the temporary S drive by performing one of the following actions:

- In Disk Management, Right-click on the left column (where it says e.g. Disk 4 Basic) & select Detach VHD.

- In Explorer, right-click on the S: drive and select Eject.

It is also necessary to update the JAR file S:\DB2PROFS\function\jar\SQDR\CAPTUREPROCS_JAR.jar; this should be done on the active system, so that the actual shared drive, not the temporary S: drive is updated. This can be done by either copying the file UDBProcs.jar from the installer media e.g.

C> cd "\temp\sqdrplus64\Program Files 64\StarQuest\sqdrplus\capagent"

C> copy

UDBProcs.jar S:\DB2PROFS\function\jar\SQDR\CAPTUREPROCS_JAR.jar

or using the Db2 replace_jar() function (be sure to issue a COMMIT).

Addition actions may be needed (e.g. restarting Db2) to refresh java classes within Db2.

Use Cluster Manager to transfer the roles to the machine that has just been updated and verify that the solution is working.

Perform the same procedure on the second machine (which is now inactive) - create and attach a temporary S: drive, update the software, stop SQDR Plus services, and detach the temporary S: drive.

Also, restore the SQDRSVC dependency on the Db2 service by running a command like this from an elevated command window (on both systems):

C> Sc config sqdrsvc depend=RpcSs/DB2CLUST

Updating Db2 and Windows

Installing Db2 fix packs and Windows updates is similar to updating StarSQL products: since these updates usually involve stopping Db2 and at least one reboot, perform the update first on the system where the SQDR solution is not running, then transfer the roles to that system while upgrading the other system. It is not necessary to attach a temporary S: drive.

However, performing a version update on Db2 (e.g. from 11.1 to 11.5) is more complicated and will be documented in a future tech doc.

Troubleshooting

Issue: After creating and starting a role (e.g. CLUSTDB2), the status of the role is displayed as Partially Running rather than Running.

Solution: A status of Partially Running means that one or more resources are not available. To identify the missing resources, select the role, right-click, and select More Actions/Show Dependency Report. Then select Resources at the bottom of the display and, if necessary, start the missing resources. For example, we noticed that CLUSTDB2 was partially running, and the report indicated that the network resource and server name were not available. The Resources display showed that the network resource and server name were offline. After starting the network resource and server name, the status changed to Running.

Issue: the following error dialog is displayed when logging on to the inactive system:

SQL5005C: The operation failed because the database manager failed to access either the database manager configuration file or the database configuration file.

Solution: This error is related to the Db2 systray application, a Windows operating system notify icon which monitors the status of a Db2 database service and provides a visual indication of when the service is started and stopped, as well as the ability to start and stop the service. This application is not needed and can be disabled from automatically starting at logon by using regedit to remove the registry value

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows\CurrentVersion\Run\DB2COPY1 - db2systray.exe DB2

You may also be able to use db2systray –auto or db2systray –clean. See the IBM documentation for systray for details.

References

- Microsoft Windows Server Failover Clustering on VMware vSphere 6.x: Guidelines for supported configurations

- Microsoft Windows Server Failover Clustering (WSFC) with shared disks on VMware vSphere 7.x: Guidelines for supported configurations

- Setup for Failover Clustering and Microsoft Cluster Service (VMware vSphere 6.5)

- Setup for Failover Clustering and Microsoft Cluster Service (VMware vSphere 6.7)

- Setup for Failover Clustering and Microsoft Cluster Service (VMware vSphere 7.0)

- Creating a Windows Server 2012 Failover Cluster

- High Availability and Disaster Recovery Options for DB2 for Linux, UNIX, and Windows - IBM Redbook SG24-7363

- Implementing IBM DB2 9.7 Enterprise Server Edition with Microsoft Failover Clustering

Several of these references are based on older versions of Windows, Db2, or VMware, so particular details may vary, though the concepts are the same.

DISCLAIMER

The information in technical documents comes without any warranty or applicability for a specific purpose. The author(s) or distributor(s) will not accept responsibility for any damage incurred directly or indirectly through use of the information contained in these documents. The instructions may need to be modified to be appropriate for the hardware and software that has been installed and configured within a particular organization. The information in technical documents should be considered only as an example and may include information from various sources, including IBM, Microsoft, and other organizations.